A clear explanation of AI learning as a data-and-feedback process, including supervised learning, unsupervised learning, RLHF, fine-tuning, and synthetic data tradeoffs.

Why this matters now

As model performance gains from raw scaling slow down, data quality, synthetic data pipelines, and post-training alignment are central to how AI systems improve in 2026.

Key points

- Enterprise deployment pressure is increasing demand for trustworthy training and evaluation data.

- Public trust and bias concerns remain high.

- Modern systems rely heavily on post-training alignment and instruction tuning, not just pretraining.

- Synthetic data is increasingly used to fill data gaps and simulate rare scenarios.

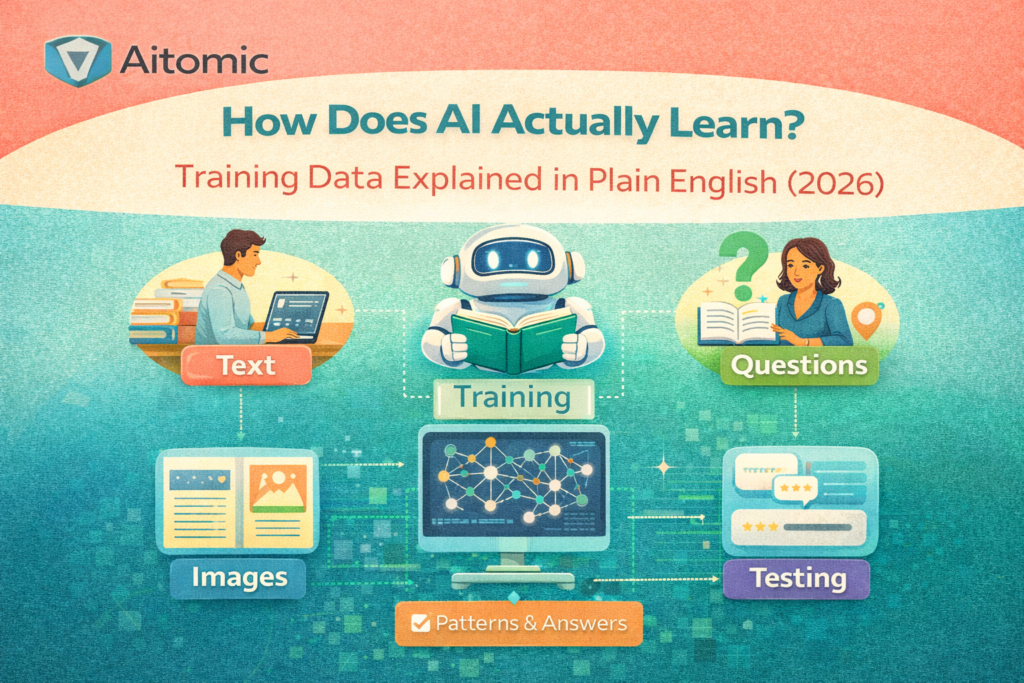

The data diet: AI becomes what it is trained on

AI learns from repeated exposure to data. High-quality, representative data generally improves model behavior; noisy or biased data degrades it.

In 2026, teams are asking not only which model to use, but what data shaped it and how it is aligned in production.

Many failures blamed on AI are actually data, labeling, or workflow failures.

Three ways AI learns: supervised, unsupervised, and feedback-driven

Supervised learning uses labeled examples and learns to predict labels.

Unsupervised learning discovers latent structure in unlabeled data.

Reinforcement learning and RLHF-style approaches use reward signals and human preference rankings to shape behavior.

- Supervised: learn correct patterns from labeled examples.

- Unsupervised: discover clusters, structure, or anomalies.

- RLHF/alignment: learn preferred responses from feedback and ranking.
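
To make the first two modes concrete, here is a minimal Python sketch using only the standard library. The toy data, the labels, and the helper names (`fit_centroids`, `predict`, `two_means`) are all invented for illustration; real systems use far richer features and models.

```python
from statistics import mean

# Toy labeled data (feature value, label). Invented for illustration only.
labeled = [(1.0, "short"), (1.2, "short"), (4.8, "long"), (5.1, "long")]

def fit_centroids(examples):
    """Supervised: learn one average feature value per label."""
    by_label = {}
    for x, y in examples:
        by_label.setdefault(y, []).append(x)
    return {y: mean(xs) for y, xs in by_label.items()}

def predict(centroids, x):
    """Predict the label whose learned centroid is closest to x."""
    return min(centroids, key=lambda y: abs(centroids[y] - x))

def two_means(xs, iterations=10):
    """Unsupervised: split unlabeled values into two groups (1-D k-means).

    Assumes xs contains at least two distinct values.
    """
    lo, hi = min(xs), max(xs)  # start the two centers at the extremes
    for _ in range(iterations):
        near_lo = [x for x in xs if abs(x - lo) <= abs(x - hi)]
        near_hi = [x for x in xs if abs(x - lo) > abs(x - hi)]
        lo, hi = mean(near_lo), mean(near_hi)
    return near_lo, near_hi

centroids = fit_centroids(labeled)
prediction = predict(centroids, 4.5)            # uses the labels it was shown
clusters = two_means([x for x, _ in labeled])   # never sees any labels
```

The supervised half needs the labels to learn anything. The unsupervised half recovers the same two groups from the feature values alone, but it cannot name them.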

RLHF in plain language: the thumbs up/thumbs down layer

Pretraining teaches broad language patterns; post-training alignment teaches the model how to behave when it responds to people.

Human preference data and evaluator rankings steer outputs toward more useful and safer behavior.

This post-training layer explains why similarly sized models can feel different in quality and tone.
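
One common way to turn "this answer is better than that one" into a training signal is a pairwise preference loss, often described as Bradley-Terry style. The sketch below is an illustration under that assumption, not a description of any particular vendor's pipeline; the function name and the reward values are hypothetical.

```python
import math

def preference_loss(reward_chosen, reward_rejected):
    """Pairwise preference loss: small when the human-preferred response
    already receives the higher reward score, large when it does not."""
    margin = reward_chosen - reward_rejected
    # -log(sigmoid(margin)), written as log(1 + exp(-margin))
    return math.log1p(math.exp(-margin))

# Hypothetical reward scores for two responses to the same prompt.
agrees = preference_loss(reward_chosen=2.0, reward_rejected=0.5)
disagrees = preference_loss(reward_chosen=0.5, reward_rejected=2.0)
```

Training a reward model amounts to lowering this loss across many ranked pairs, so `disagrees` (the scorer contradicts the evaluator) is penalized more heavily than `agrees`.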

Garbage in, garbage out: how bias enters the system

Bias is often a data and objective-design problem, not a model personality issue.

If data underrepresents groups or embeds historical discrimination, the model can reproduce those patterns.

Bias mitigation needs domain expertise, measurement, and governance, not only engineering speed.
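
The measurement step can start very simply: check how much of the evaluation data each group contributes and how accurate the model is for each group. The sketch below assumes hypothetical records of the form `(group, true_label, predicted_label)`; the groups, labels, and the `group_report` helper are made up for illustration.

```python
from collections import Counter

# Hypothetical evaluation records: (group, true_label, predicted_label).
records = [
    ("A", 1, 1), ("A", 0, 0), ("A", 1, 1), ("A", 0, 0), ("A", 1, 1), ("A", 0, 1),
    ("B", 1, 0), ("B", 0, 0),
]

def group_report(rows):
    """Return each group's share of the data and its accuracy."""
    counts = Counter(group for group, _, _ in rows)
    correct = Counter(group for group, truth, pred in rows if truth == pred)
    total = len(rows)
    return {
        group: {
            "share": counts[group] / total,
            "accuracy": correct[group] / counts[group],
        }
        for group in counts
    }

report = group_report(records)
```

In this toy data, group B is both underrepresented and served less accurately. A report like this only surfaces the gap; deciding which groups and metrics matter still takes domain expertise and governance.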

The synthetic data shift: benefits and risks

Synthetic data helps when real data is scarce, sensitive, or expensive.

Weak synthetic generation or weak validation can amplify errors and distort distributions.

Robust workflows keep synthetic pipelines grounded with human-reviewed ground truth and benchmark testing.
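
One lightweight grounding check is to compare the label mix of a synthetic batch against a human-reviewed reference set. The sketch below uses total variation distance for that comparison; the labels, the counts, and the `MAX_DRIFT` threshold are hypothetical values chosen for illustration.

```python
from collections import Counter

def label_shares(labels):
    """Fraction of the dataset carried by each label."""
    counts = Counter(labels)
    return {label: n / len(labels) for label, n in counts.items()}

def total_variation(p, q):
    """Total variation distance between two label distributions (0 to 1)."""
    keys = set(p) | set(q)
    return 0.5 * sum(abs(p.get(k, 0.0) - q.get(k, 0.0)) for k in keys)

# Hypothetical datasets: a human-reviewed reference and a synthetic batch.
reviewed = ["ok"] * 90 + ["fraud"] * 10
synthetic = ["ok"] * 60 + ["fraud"] * 40

drift = total_variation(label_shares(reviewed), label_shares(synthetic))

MAX_DRIFT = 0.1  # hypothetical tolerance; a real threshold is a team decision
needs_review = drift > MAX_DRIFT
```

A flagged batch is not automatically wrong, because oversampling rare scenarios is often the point of generating synthetic data. The check exists so that a shift like this is a reviewed decision and not an accident.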

Fine-tuning and specialization

Fine-tuning adapts general models for specific domains like legal, support, or internal policy.

It can increase relevance and consistency, but poor or narrow training data can cause the model to overfit.

Before fine-tuning, teams should often check whether retrieval or workflow design improvements would solve the problem.
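
A basic guard against overfitting is to hold some examples out of fine-tuning and compare scores on the two sets. The sketch below shows the split and the comparison; the example IDs, the scores, and the helper names are hypothetical, and the scoring itself is left out because it depends on the task.

```python
import random

def split_examples(examples, holdout_fraction=0.2, seed=0):
    """Shuffle reproducibly, then reserve a held-out slice for evaluation."""
    shuffled = examples[:]
    random.Random(seed).shuffle(shuffled)
    n_holdout = round(len(shuffled) * holdout_fraction)
    cut = len(shuffled) - n_holdout
    return shuffled[:cut], shuffled[cut:]

def overfit_gap(train_score, heldout_score):
    """A large positive gap suggests memorization rather than generalization."""
    return train_score - heldout_score

# Hypothetical fine-tuning examples, identified by ID only.
examples = [f"ticket-{i}" for i in range(10)]
train, heldout = split_examples(examples)

# Made-up scores for illustration; real ones come from a task-specific evaluation.
gap = overfit_gap(train_score=0.98, heldout_score=0.71)
```

If the held-out score trails the training score by a wide margin, the fine-tuning data is probably too small, too narrow, or too noisy for the behavior being taught.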