A beginner-friendly but technically honest explainer of the predictive architecture behind LLMs such as ChatGPT, Gemini, and Claude.

Why this matters now

LLMs are now embedded in writing tools, coding assistants, enterprise copilots, search-style interfaces, and agent workflows. In 2026, understanding their predictive architecture helps teams use them better and trust them less blindly.

Key figures

- Scale trend: Modern LLMs have billions of parameters, and some systems have far more.

- Enterprise AI exposure: 78% of organizations report AI use in at least one function (Stanford HAI AI Index 2025).

- Public trust tension: Many Americans remain more concerned than excited about AI (Pew Research, 2025).

- Operational takeaway: LLM fluency increases adoption and over-trust risk at the same time.

The prediction game: what an LLM is actually doing under the hood

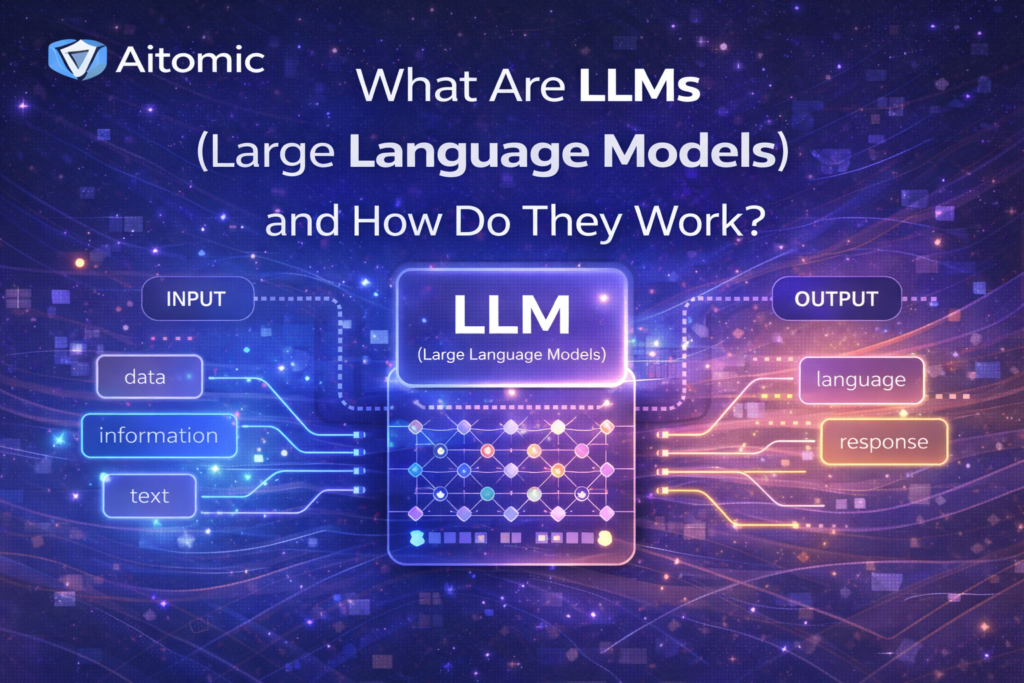

At its core, an LLM plays a prediction game: next-token prediction. Given a sequence of tokens, it assigns a probability to every token in its vocabulary, and the next token is then picked or sampled from that distribution.

At large scale, repeated prediction is powerful enough to produce drafting, summarization, translation, coding help, and reasoning-style outputs that feel conversational.
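The prediction loop can be sketched in a few lines. This is a toy illustration, not how any production model is implemented: the vocabulary, the `BIGRAM_SCORES` table, and the function names are all made up for the example, and the scores depend only on the last token, whereas a real LLM computes them from the whole context using its learned parameters.

```python
import math
import random

# Hypothetical toy vocabulary and hand-written scores (assumptions for
# illustration only). A real model learns its scores during training.
VOCAB = ["the", "cat", "sat", "on", "mat", "."]
BIGRAM_SCORES = {
    "the": {"cat": 2.0, "mat": 1.5},
    "cat": {"sat": 3.0},
    "sat": {"on": 3.0},
    "on": {"the": 3.0},
    "mat": {".": 3.0},
}


def softmax(scores):
    """Turn raw scores into probabilities that sum to 1."""
    highest = max(scores)
    exps = [math.exp(s - highest) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def next_token_distribution(context):
    """Return a probability for every vocabulary token, given the context."""
    last = context[-1]  # toy simplification: only the last token is used
    scores = [BIGRAM_SCORES.get(last, {}).get(tok, 0.0) for tok in VOCAB]
    return dict(zip(VOCAB, softmax(scores)))


def generate(prompt, steps, greedy=True, seed=0):
    """Repeat next-token prediction, appending one token per step."""
    rng = random.Random(seed)
    tokens = list(prompt)
    for _ in range(steps):
        dist = next_token_distribution(tokens)
        if greedy:
            nxt = max(dist, key=dist.get)  # most probable token
        else:
            nxt = rng.choices(list(dist), weights=list(dist.values()))[0]
        tokens.append(nxt)
    return tokens


print(generate(["the"], 3))  # ['the', 'cat', 'sat', 'on']
```

Setting `greedy=False` samples from the distribution instead of always taking the top token, which is one reason the same prompt can produce different outputs.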

LLMs are predictive language engines, not digital minds. They are statistically strong at sequence completion, not conscious thinkers.

The large in LLM: what billions of parameters really means

Parameters are adjustable weights learned during training. A practical analogy is billions of tiny knobs tuning pattern associations.
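To make "billions of knobs" concrete, here is a rough back-of-the-envelope count. It is a sketch under stated assumptions: a decoder-style transformer whose feed-forward width is four times the model width, with biases and normalization weights ignored. The function names and both example configurations are illustrative choices, not figures from any vendor.

```python
def approx_transformer_params(n_layers, d_model, vocab_size):
    """Rough parameter estimate (assumption: feed-forward width = 4 * d_model).

    Per layer: about 4 * d_model^2 for the attention projections
    (query, key, value, output) plus about 8 * d_model^2 for the
    feed-forward block. Biases and layer norms are ignored.
    """
    per_layer = 12 * d_model ** 2
    embeddings = vocab_size * d_model
    return n_layers * per_layer + embeddings


def weight_memory_gb(n_params, bytes_per_param=2):
    """Memory needed just to store the weights (2 bytes = 16-bit floats)."""
    return n_params * bytes_per_param / 1e9


# A small configuration: 12 layers, width 768, about 50k vocabulary entries.
small = approx_transformer_params(n_layers=12, d_model=768, vocab_size=50257)
print(f"{small:,}")  # 123,532,032

# A hypothetical larger configuration, to show how quickly the count grows.
large = approx_transformer_params(n_layers=32, d_model=4096, vocab_size=32000)
print(f"{large / 1e9:.1f}B parameters, "
      f"about {weight_memory_gb(large):.0f} GB of weights at 16-bit precision")
```

The count grows with the square of the model width, which is why widening a model is far more expensive than it first appears.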

Size alone does not guarantee better outputs. Data quality, training process, alignment, evaluation, and deployment design matter as much.

In 2026, many teams compare large general-purpose models with small language models (SLMs), which are smaller and often specialized for narrow tasks.

Transformer architecture in plain English: why self-attention matters

Transformers improved language modeling by evaluating relationships across a sequence more effectively than older strictly sequential approaches.

Self-attention lets the model weigh how much every other token in the context matters when interpreting each token.

This helps explain stronger summarization, rewriting, and code completion behavior in modern LLMs.
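The weighting step in self-attention can be sketched as scaled dot-product attention. This is a minimal sketch in pure Python with one loud simplification: the token vectors are used directly as queries, keys, and values, whereas a real transformer first passes them through learned projection matrices and runs many attention heads in parallel. The embedding numbers are made up.

```python
import math


def softmax(xs):
    """Turn raw scores into weights that sum to 1."""
    highest = max(xs)
    exps = [math.exp(x - highest) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def self_attention(queries, keys, values):
    """Scaled dot-product attention over small lists of vectors."""
    d_k = len(keys[0])
    outputs, all_weights = [], []
    for q in queries:
        # How relevant is each position to this query?
        scores = [dot(q, k) / math.sqrt(d_k) for k in keys]
        weights = softmax(scores)
        # Blend the value vectors according to those weights.
        blended = [
            sum(w * v[j] for w, v in zip(weights, values))
            for j in range(len(values[0]))
        ]
        outputs.append(blended)
        all_weights.append(weights)
    return outputs, all_weights


# Made-up 2-dimensional embeddings for three tokens (illustrative only).
# Tokens 0 and 2 point in similar directions; token 1 is different.
embeddings = [[1.0, 0.0], [0.0, 1.0], [0.9, 0.1]]
outputs, weights = self_attention(embeddings, embeddings, embeddings)

for i, row in enumerate(weights):
    print(i, [round(w, 3) for w in row])
```

Each row of weights shows how much one token draws on every token in the sequence. In this toy input, token 0 gives more weight to the similar token 2 than to the dissimilar token 1.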

Tokens vs words: how AI reads text and code

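LLMs process tokens, not words as humans read them. A token can be a whole word, a piece of a word (a subword), a punctuation mark, or a code symbol.

Token limits cap how much text a model can consider at once, known as its context window. That budget typically covers both the prompt and the generated output, so long prompts consume it quickly.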
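A toy tokenizer makes the word-versus-token gap visible. This sketch is an assumption-heavy stand-in: it only splits on word characters and punctuation, while production tokenizers use learned subword vocabularies and will produce different counts. The function names and the limits in the example are hypothetical.

```python
import re

# Toy rule: a run of word characters is one token, and every other
# non-space character is its own token. Real tokenizers split differently.
TOKEN_PATTERN = re.compile(r"\w+|[^\w\s]")


def toy_tokenize(text):
    """Split text into word and punctuation tokens."""
    return TOKEN_PATTERN.findall(text)


def fits_in_context(prompt, context_limit, reserved_for_output):
    """Check whether the prompt leaves enough room for the reply."""
    used = len(toy_tokenize(prompt))
    return used + reserved_for_output <= context_limit, used


print(toy_tokenize("Hello, world!"))  # ['Hello', ',', 'world', '!']
print(toy_tokenize("print(x+1)"))     # ['print', '(', 'x', '+', '1', ')']

# Hypothetical limits chosen only to show the arithmetic.
ok, used = fits_in_context("Summarize this report. " * 10,
                           context_limit=64, reserved_for_output=32)
print(ok, used)  # False 40
```

Code is a useful reminder here: a short expression such as `print(x+1)` is one "word" to a human eye but six tokens under even this simple rule.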

Clear structure, constraints, and examples often improve output quality because they shape token sequences more predictably.

SLMs vs LLMs in 2026: why smaller models are part of the story

Many teams do not need the biggest model for every task. They need speed, lower cost, governance, and reliability in a narrow domain.

Smaller or domain-tuned models can reduce latency and cost while improving controllability in targeted workflows.

Large models still matter for broad generalization and complex multimodal use cases.
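One way teams act on this trade-off is a simple routing rule that sends narrow, well-defined tasks to a small model and everything else to a large one. The sketch below is hypothetical throughout: the model names, task labels, and cost figures are placeholders, not real products or prices.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelProfile:
    name: str
    relative_cost: float       # placeholder units, not real pricing
    handles_broad_tasks: bool


# Hypothetical profiles for illustration only.
SMALL = ModelProfile("small-domain-model", relative_cost=1.0,
                     handles_broad_tasks=False)
LARGE = ModelProfile("large-general-model", relative_cost=10.0,
                     handles_broad_tasks=True)

# Hypothetical narrow tasks the small model has been tuned for.
NARROW_TASKS = {"ticket_classification", "invoice_field_extraction"}


def choose_model(task_type, needs_multimodal=False):
    """Prefer the small model unless the task is broad or multimodal."""
    if needs_multimodal or task_type not in NARROW_TASKS:
        return LARGE
    return SMALL


print(choose_model("ticket_classification").name)  # small-domain-model
print(choose_model("open_ended_research").name)    # large-general-model
```

In practice the routing decision would also weigh measured accuracy, latency, and governance requirements, which this sketch leaves out.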

The honest analysis: the stochastic parrot critique

The stochastic parrot critique highlights a real risk: fluent language can be mistaken for true understanding.

LLMs can help with communication tasks while still failing on grounded logic, real-world consequences, or hidden assumptions.

Best practice is to use LLMs as powerful synthesis systems with grounding, retrieval, and human accountability.
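Grounding with retrieval can be sketched as two steps: find the most relevant source text, then instruct the model to answer only from it. This is a minimal sketch under stated assumptions. It ranks documents by shared words, whereas real systems commonly use embedding-based search, and the documents, function names, and prompt wording are invented for the example. No model is called here; the code only builds the prompt.

```python
import re


def words(text):
    """Lowercased set of alphanumeric words in the text."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question, documents, top_k=1):
    """Rank documents by how many words they share with the question."""
    q = words(question)
    ranked = sorted(documents, key=lambda d: len(q & words(d)), reverse=True)
    return ranked[:top_k]


def build_grounded_prompt(question, documents):
    """Assemble a prompt that restricts the answer to retrieved sources."""
    sources = retrieve(question, documents)
    context = "\n".join(f"- {s}" for s in sources)
    return (
        "Answer using only the sources below. "
        "If they do not contain the answer, say so.\n"
        f"Sources:\n{context}\n"
        f"Question: {question}"
    )


# Invented example documents.
docs = [
    "Refunds are accepted within 30 days of purchase.",
    "Our office is closed on public holidays.",
]
print(build_grounded_prompt("How many days do I have for refunds?", docs))
```

The human accountability part stays outside the code: someone still needs to check that the retrieved source is current and that the answer matches it.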